SteadyCert

Beautiful graphics for every concept in the CISA Review Manual

Week 3 — Alex Audits a CRM Go-Live

It's Week 3. Alex's manager assigns her to audit Meridian Corp's biggest active project: a new CRM system going live in 6 weeks. The project manager, Dave, is confident. "We're on track," he says. Alex asks to see the project plan, the testing documentation, and the change log. Dave hesitates. "We've been moving fast," he says. "Agile, you know?" Alex opens her notebook. She has 6 weeks to find out whether this system is ready to go live — or whether she needs to stop it.

Project Management

Project Governance & the Charter

Alex asks for the project charter. Dave hands her a PowerPoint deck. There's no scope document, no project sponsor formally signed, and the go-live date was set before requirements were agreed. "Who authorised this project?" Alex asks. Dave points to a two-line email from the VP of Sales. Alex writes her first finding: no formal project charter.

The Project Charter

The formal document that authorises a project and defines its boundaries. Without a charter, there is no formal project — only activity.

Must Include:

- Business objectives & scope

- Project sponsor (executive owner)

- Budget & timeline

- Key milestones & deliverables

Auditor's Checklist:

- Is the sponsor identified & active?

- Were requirements agreed BEFORE go-live date?

- Is there a formal change control process?

- Are roles & responsibilities documented?

Project Governance Structure

- Steering Committee: Strategic oversight

- Project Sponsor: Accountable for business case

- Project Manager: Day-to-day delivery

- IS Auditor: Independent advisor

Key PM Tools

- Gantt chart: Timeline & dependencies

- PERT: Optimistic / most likely / pessimistic estimates, weighted into an expected time

- Critical Path: Longest sequence = minimum duration

- Earned Value: Value of work completed vs. planned value and actual cost
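
The three quantitative tools above reduce to short calculations. The sketch below is illustrative only: the task names, durations, and monetary figures are hypothetical and are not taken from the Meridian case. It uses the standard PERT weighting (O + 4M + P) / 6, a longest-path walk for the critical path, and the usual earned-value variances and indices.

```python
from functools import lru_cache


def pert_expected(optimistic, most_likely, pessimistic):
    """PERT expected time: weighted average (O + 4M + P) / 6."""
    return (optimistic + 4 * most_likely + pessimistic) / 6


# Hypothetical task network: name -> (duration in days, predecessors)
TASKS = {
    "requirements": (10, []),
    "design": (15, ["requirements"]),
    "build": (30, ["design"]),
    "migration_prep": (20, ["design"]),
    "testing": (15, ["build", "migration_prep"]),
}


@lru_cache(maxsize=None)
def earliest_finish(task):
    """Earliest finish = own duration + latest finish among predecessors."""
    duration, predecessors = TASKS[task]
    return duration + max((earliest_finish(p) for p in predecessors), default=0)


def earned_value_metrics(planned_value, earned_value, actual_cost):
    """Standard earned-value variances and performance indices."""
    return {
        "cost_variance": earned_value - actual_cost,        # negative = over budget
        "schedule_variance": earned_value - planned_value,  # negative = behind schedule
        "cpi": earned_value / actual_cost,                  # below 1 = over budget
        "spi": earned_value / planned_value,                # below 1 = behind schedule
    }


expected_days = pert_expected(4, 6, 14)
# The critical path is the longest chain, so its length is the minimum project duration.
minimum_duration = max(earliest_finish(task) for task in TASKS)
metrics = earned_value_metrics(planned_value=500_000, earned_value=400_000, actual_cost=450_000)

print(expected_days, minimum_duration, metrics)
```

In this made-up network the longest chain runs requirements, design, build, testing, so shortening migration_prep would not bring the end date forward. That is the practical meaning of "longest sequence = minimum duration".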

The project sponsor (NOT the project manager) is accountable for the business case. ISACA frequently asks "who is MOST responsible for project success?" — the answer is the sponsor. The PM manages delivery; the sponsor owns the business outcome. Also: the auditor's greatest concern is a project without a formal charter — no charter means no defined scope, no accountability, and no basis for change control.

The FBI's Sentinel case management system was originally budgeted at $425 million. After years of governance problems, including unclear ownership and scope creep, the FBI took development back from the prime contractor in 2010. The system was finally deployed in 2012 at a reported cost of about $451 million, roughly three years behind its original schedule.

Alex now knows the project has no charter. But she needs to understand what they actually built — and whether they followed any process at all.

SDLC Phases

The System Development Life Cycle

Alex maps what Meridian actually did against what they should have done. They skipped formal requirements sign-off, went straight from concept to coding, and are now doing design while simultaneously testing. It's like building a skyscraper where one team is still pouring the foundation while another is already painting the top floor. "When was the design approved?" she asks. Dave stares at her blankly.

Auditor Involvement in SDLC

The IS auditor should be involved in every phase of the SDLC — from planning through maintenance. Early involvement reduces the cost of fixing issues later. The auditor acts as an independent advisor, not a project team member.

The auditor should participate in every SDLC phase but must never become part of the development team (independence). The cost of fixing defects increases exponentially the later they are found — this is why early auditor involvement is critical. If the exam asks "when should the auditor first get involved?" the answer is always the EARLIEST phase — planning/feasibility.

The Healthcare.gov launch failure (2013) resulted from skipping critical SDLC phases — integration testing between 33+ vendor systems was never properly completed before launch. The site failed under real load on Day 1, affecting 600,000 users.

Alex has mapped the timeline. Now she needs to understand what methodology — if any — the team was following.

Development Methodologies

Waterfall vs. Agile vs. Spiral

Dave says they're doing Agile. Alex asks to see the sprint backlog, sprint reviews, and retrospectives. There are none. They're doing something. It isn't Agile. It isn't Waterfall either. Alex writes in her notebook: "Ad hoc development. No defined methodology. No sprint cadence. No documented iterations." She underlines it twice.

Waterfall Audit Focus

- Are phase gates enforced?

- Is sign-off required before moving to next phase?

- Complete documentation at each stage?

Agile Audit Focus

- Are user stories traced?

- Is there a product backlog?

- Are sprint reviews documented?

Spiral Audit Focus

- Is risk analysis done each cycle?

- Are prototypes validated?

- Is progress measurable?

Agile prioritises working software OVER comprehensive documentation — but NOT instead of it. Critical controls, security requirements, and audit trails are still required in Agile environments. The exam will try to trick you with "Agile doesn't need documentation" — that's always wrong. Also: match the methodology to the scenario. Stable requirements = Waterfall. Evolving requirements = Agile. High-risk, large project = Spiral.

Many organisations claim to "do Agile" without sprints, backlogs, or retrospectives — what researchers call "Dark Scrum" or "Scrumbut." A 2022 survey found 58% of teams claiming Agile had no sprint reviews. Claiming a methodology without following its practices provides no audit assurance.

No methodology. No charter. Alex now digs deeper — was there even a proper business case for this CRM?

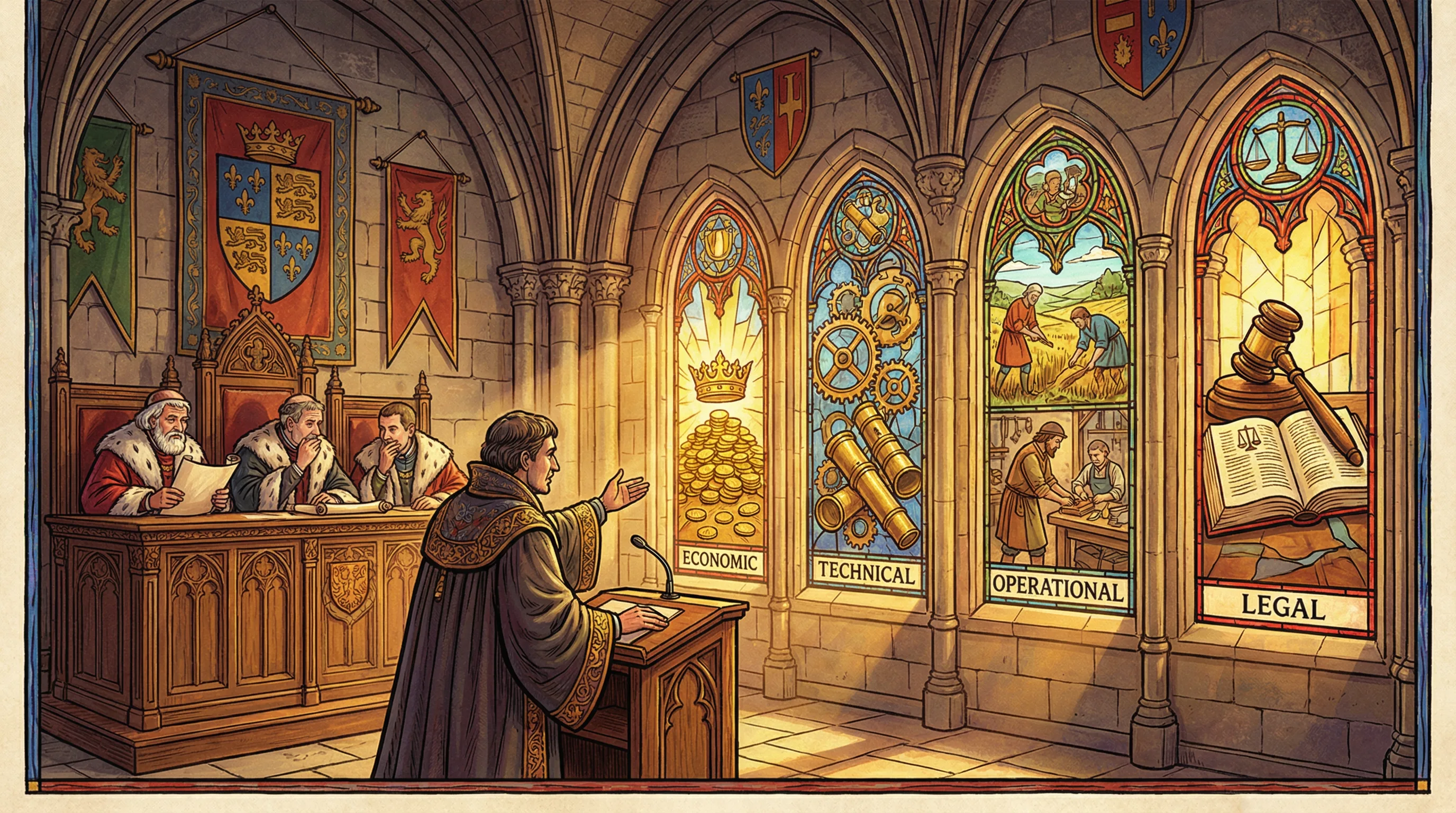

Business Case & Feasibility

The Four Tests of Feasibility

The CRM was approved based on a two-paragraph email from the Sales Director. No economic feasibility analysis. No technical feasibility review. No assessment of whether Meridian's infrastructure could support it. "Where's the business case?" Alex asks. Dave says, "The Sales Director wanted it. That was enough." Alex writes: "No formal feasibility study conducted for any of the four dimensions."

Economic Feasibility

Can we afford it? Will it generate ROI?

- Cost-benefit analysis (CBA)

- ROI, NPV, IRR calculations

- TCO (Total Cost of Ownership)

- Payback period
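
As a rough illustration of the economic tests listed above, the following sketch computes NPV, simple ROI, and payback period for a made-up cash-flow series. The figures and the 10% discount rate are assumptions for the example, not Meridian data.

```python
def npv(rate, cash_flows):
    """Net present value. cash_flows[0] is the year-0 outlay (negative)."""
    return sum(cf / (1 + rate) ** year for year, cf in enumerate(cash_flows))


def simple_roi(cash_flows):
    """Undiscounted return on investment: net gain divided by initial outlay."""
    outlay = -cash_flows[0]
    return (sum(cash_flows[1:]) - outlay) / outlay


def payback_period(cash_flows):
    """First year in which cumulative undiscounted cash flow reaches zero, else None."""
    cumulative = 0
    for year, cf in enumerate(cash_flows):
        cumulative += cf
        if cumulative >= 0:
            return year
    return None


# Hypothetical project: 1,000 up front, 400 of net benefit per year for four years.
flows = [-1000, 400, 400, 400, 400]

project_npv = npv(0.10, flows)
project_roi = simple_roi(flows)
project_payback = payback_period(flows)

print(round(project_npv, 2), project_roi, project_payback)
```

A positive NPV at the organisation's required rate of return supports economic feasibility. It says nothing about the technical, operational, or legal tests.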

Technical Feasibility

Can we build it with available technology?

- Technology readiness

- Staff skills available

- Infrastructure capacity

- Integration complexity

Operational Feasibility

Will users adopt and operate it?

- User acceptance likelihood

- Process change impact

- Training requirements

- Organisational readiness

Legal Feasibility

Does it comply with laws & regulations?

- Regulatory compliance

- Licensing requirements

- Privacy & data protection

- Contractual obligations

A feasibility study should be completed BEFORE the project begins. All four types must be assessed — economic feasibility alone is not sufficient. A project can be economically attractive but technically impossible or legally prohibited. When the exam asks "which feasibility type is MOST important?" — it's a trick. The answer is: all four are required; no single type is sufficient.

Kodak's digital camera technology was invented internally in 1975. The business case for investing in digital was rejected because it would cannibalise film revenue. The failure to conduct honest feasibility analysis contributed to Kodak's 2012 bankruptcy.

No business case, no feasibility analysis. Alex turns to the next question: did anyone actually document what this CRM is supposed to do?

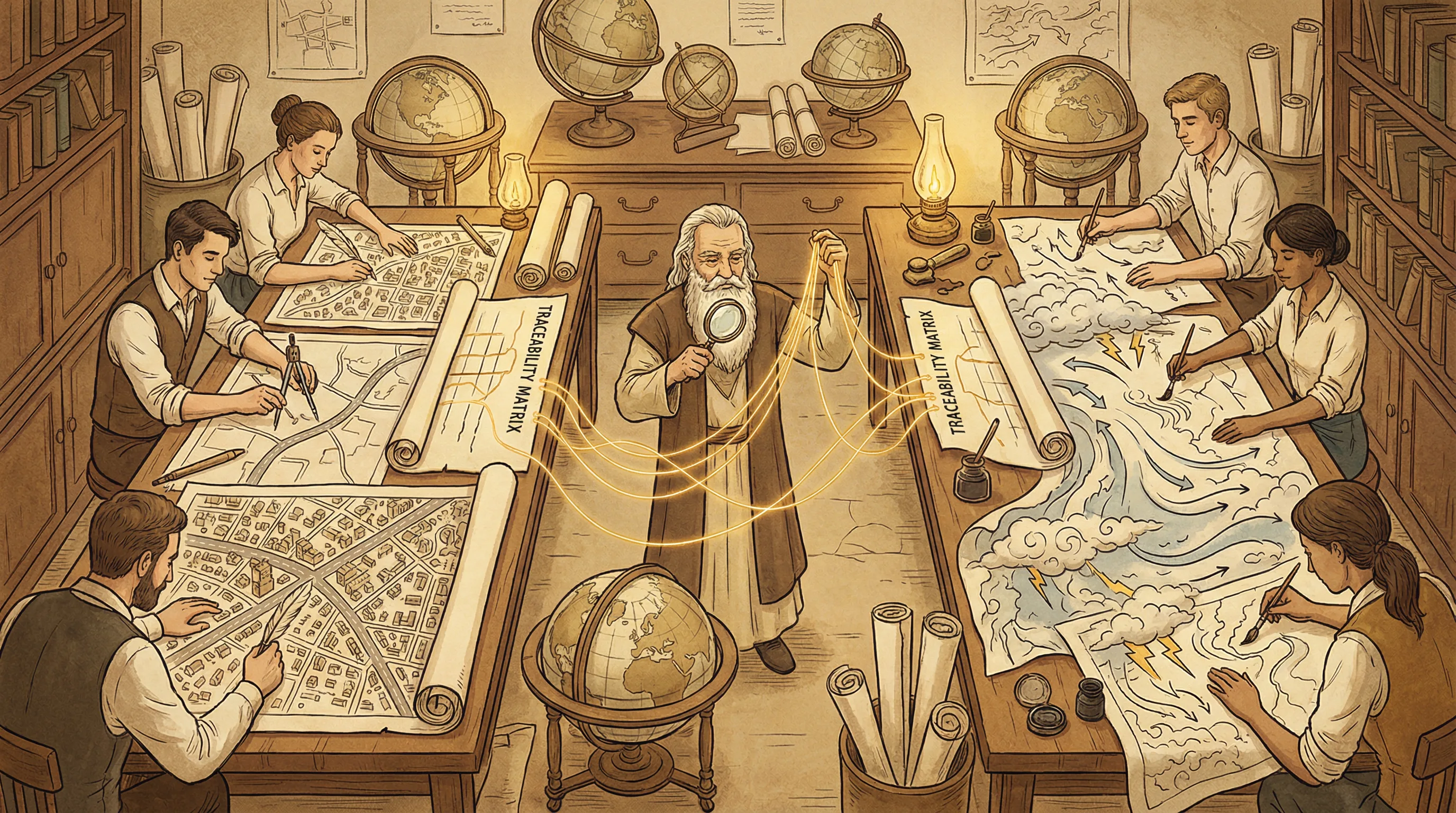

Requirements Management

Requirements & Traceability

Alex asks for the requirements document. Dave produces a 200-page spreadsheet. She asks which requirements have been signed off by the business. Dave points to an email thread. From 8 months ago. With people who have since left the company. "So nobody currently at Meridian has formally approved these requirements?" Alex asks. The silence tells her everything.

Functional Requirements

"What the system does"

- Business rules & logic

- Data processing operations

- User interactions & workflows

- Reports & outputs

Non-Functional Requirements

"How the system performs"

- Performance & speed

- Security & access

- Availability & uptime

- Scalability & capacity

Traceability Matrix

A traceability matrix maps each requirement to its corresponding design element, test case, and implementation. It ensures nothing is missed and provides an audit trail from requirement to delivery.
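
A traceability matrix can be checked mechanically. The sketch below assumes a hypothetical structure, with made-up requirement IDs, design references, test-case IDs, and file paths, and reports every requirement that lacks a design element, a test case, or an implementation.

```python
# Hypothetical requirement IDs and trace links, for illustration only.
REQUIREMENT_IDS = ["REQ-001", "REQ-002", "REQ-003"]

TRACE_LINKS = {
    "REQ-001": {"design": "DES-010", "test_case": "TC-101", "implementation": "crm/contacts.py"},
    "REQ-002": {"design": "DES-011", "test_case": None, "implementation": "crm/invoices.py"},
    # REQ-003 has no row at all: it was never traced.
}

LINK_TYPES = ("design", "test_case", "implementation")


def traceability_gaps(requirement_ids, trace_links):
    """Return {requirement_id: [missing link types]} for incompletely traced requirements."""
    gaps = {}
    for req_id in requirement_ids:
        links = trace_links.get(req_id, {})
        missing = [kind for kind in LINK_TYPES if not links.get(kind)]
        if missing:
            gaps[req_id] = missing
    return gaps


gaps = traceability_gaps(REQUIREMENT_IDS, TRACE_LINKS)
for req_id, missing in gaps.items():
    print(f"{req_id}: missing {', '.join(missing)}")
```

An empty result is the evidence an auditor is looking for: every requirement has been designed, built, and tested.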

The traceability matrix is the auditor's best friend — it provides evidence that every requirement was designed, built, and tested. If the exam asks what an auditor should review to ensure completeness, the traceability matrix is usually the answer. Also: requirements must be signed off by BUSINESS USERS, not just the development team.

The Denver International Airport baggage system (1995) famously failed because requirements were added and changed throughout development without formal sign-off. The $186 million system was 16 months late and still malfunctioned on opening day.

Requirements without sign-off. Alex turns to the design — if there even is one.

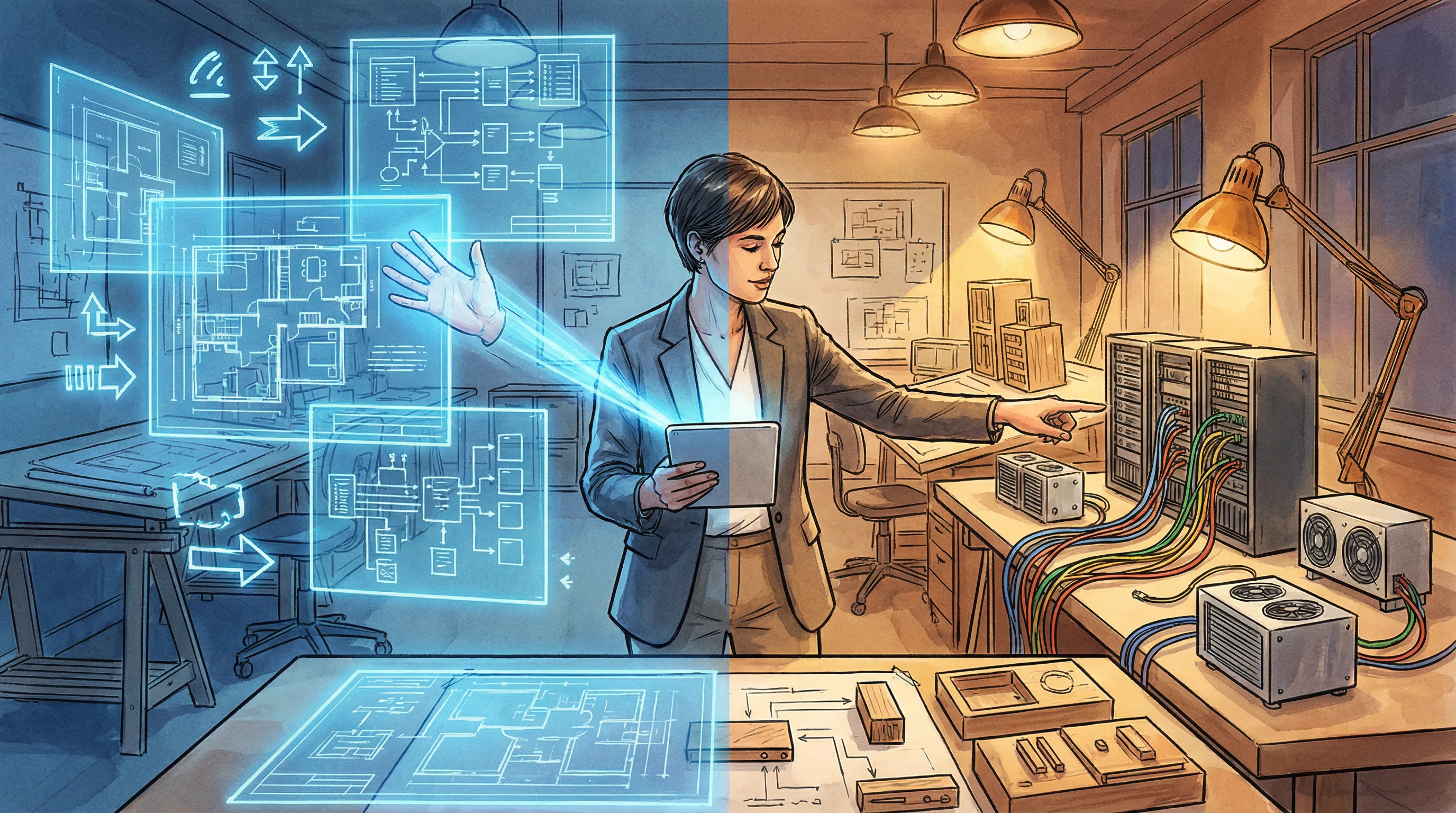

System Design

Logical vs. Physical Design

The logical design exists — Alex finds data flow diagrams and entity-relationship models. But the physical design — how it actually maps to Meridian's infrastructure, databases, and network — was never formally documented. The developers are building from memory. "Where's the physical architecture document?" Alex asks. Dave shrugs. "It's in their heads." Alex writes: "No formal physical design. Single point of knowledge failure."

Logical Design

"What the system does — abstractly"

- Data flow diagrams (DFDs)

- Entity-Relationship diagrams (ERDs)

- Process models

- Technology-independent

Physical Design

"How the system is built — concretely"

- Hardware specifications

- Network architecture

- Database schema

- Technology-specific

Data Flow Diagram (DFD)

Shows how data moves through a system. Uses processes, data stores, external entities, and data flows.

Entity-Relationship Diagram (ERD)

Models data entities and their relationships. Foundation for database design.

Decision Table / Tree

Maps complex business rules to outcomes. Ensures all conditions are covered.
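
"Ensures all conditions are covered" can be tested directly. In this sketch the three conditions and the actions are invented for illustration. It enumerates every combination of conditions and lists the ones the decision table does not handle.

```python
from itertools import product

# Hypothetical rules: (existing_customer, credit_check_passed, order_over_limit) -> action
DECISION_TABLE = {
    (True, True, False): "approve",
    (True, True, True): "refer to manager",
    (True, False, False): "refer to manager",
    (True, False, True): "reject",
    (False, True, False): "approve",
    (False, True, True): "refer to manager",
    (False, False, True): "reject",
    # (False, False, False) is deliberately left out to show a coverage gap.
}


def uncovered_combinations(table, condition_count):
    """List every True/False combination of conditions that has no rule in the table."""
    all_combinations = product([True, False], repeat=condition_count)
    return [combo for combo in all_combinations if combo not in table]


missing_rules = uncovered_combinations(DECISION_TABLE, condition_count=3)
print(missing_rules)
```

With n yes/no conditions a complete table has 2**n rules, so any shortfall points to a business rule nobody specified.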

Logical design comes BEFORE physical design. An IS auditor should verify that the logical design is approved by users before physical design begins. DFDs show data flow, not control flow — know the difference. And remember: if the physical design only exists "in people's heads," that's a key-person dependency and a critical risk.

The Ariane 5 rocket explosion (1996) was caused by reusing software from Ariane 4 without verifying it against the new physical design parameters. A 64-bit number was converted to a 16-bit integer, causing overflow. The $370 million rocket exploded 37 seconds after launch.

Logical design exists, physical design doesn't. Alex moves to the question that keeps her up at night: has this CRM been properly tested?

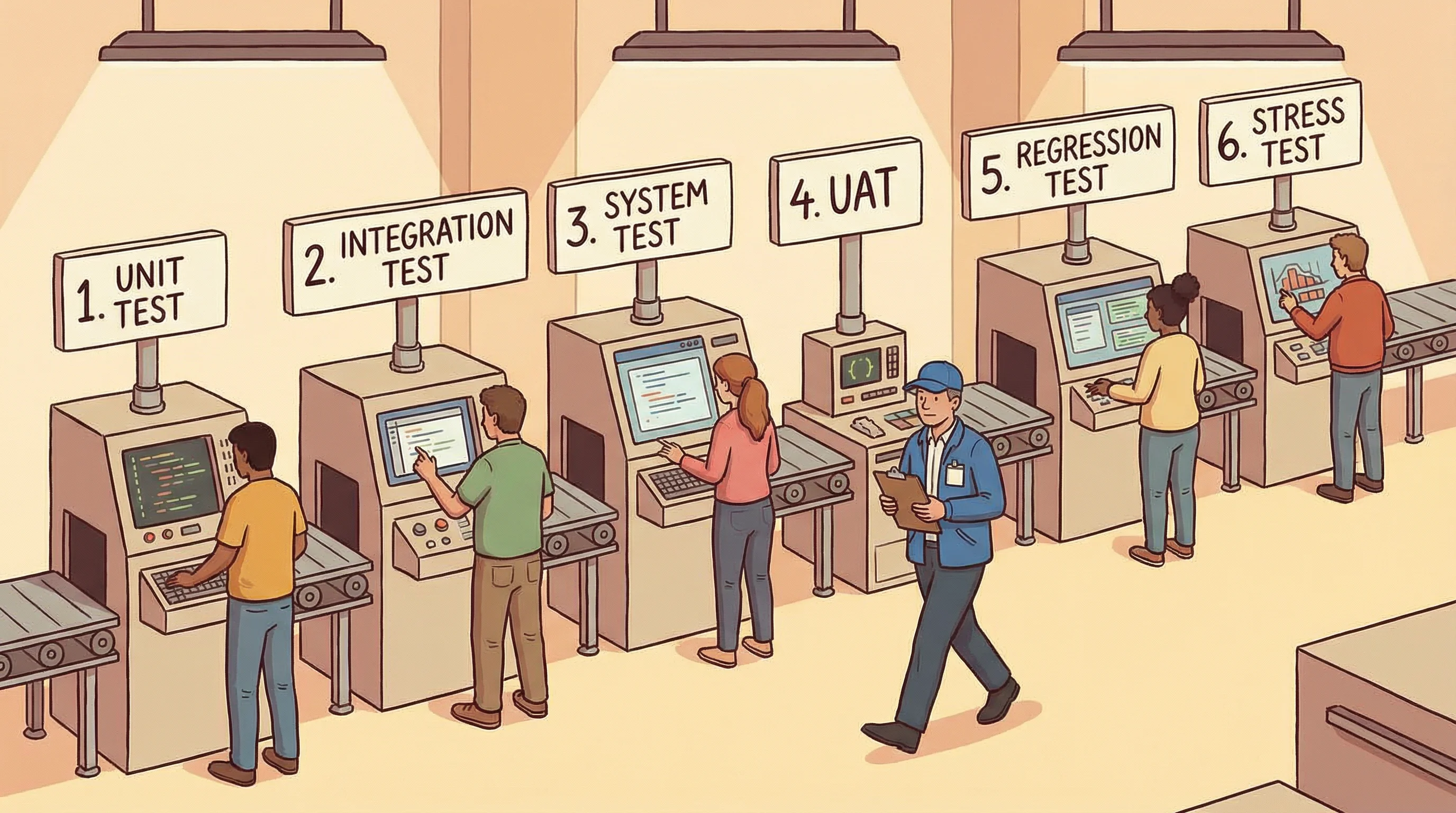

Testing Types

The Six Testing Stations

Alex asks for the test plan. Unit testing was done. Integration testing... "mostly." System testing is scheduled for next week. UAT hasn't been planned yet. There's no regression test plan. The go-live is in 6 weeks. Alex stares at the whiteboard. Four of six testing stations haven't been completed, and the team thinks they're on track. She circles "UAT: NOT PLANNED" in red.

Unit Testing

Who: Developers

Tests individual modules or functions in isolation. Smallest testable part.

Integration Testing

Who: Developers

Tests how modules work together. Verifies interfaces between components.

System Testing

Who: QA Team

Tests the complete, integrated system against requirements. End-to-end validation.

UAT (User Acceptance)

Who: End Users

Final validation by actual users. Confirms the system meets business needs.

Regression Testing

Who: QA Team

Re-tests after changes to ensure existing functionality still works correctly.

Stress / Load Testing

Who: Performance Team

Tests system under extreme conditions — peak load, volume, concurrency.

Testing Progression: Small → Large

UAT is performed by END USERS, not developers or QA. It is the FINAL test before go-live and must be signed off by the business owner. A system that passes unit testing is NOT ready for go-live; all testing stages are required. Also: UAT is MANAGEMENT's responsibility. The auditor verifies that it was done adequately but does not plan or execute it.

The Boeing 737 MAX MCAS system underwent limited system testing and no real-world failure mode testing. Two crashes killed 346 people. Post-investigation, it emerged that testing had not covered scenarios where a single sensor failed.

Testing is incomplete. But there's something even more alarming in the project log — changes that nobody approved.

Change Management

The Change Control Process

Alex finds 47 change requests in the project log. Twelve have been implemented. None went through the change advisory board. Two changed the core data model without anyone updating the system design documents. "Who approved these changes?" Alex asks. Dave says, "We just did them. The developers know what they're doing." Alex's pen stops. Uncontrolled changes are the single fastest way to kill a system.

Request

Submit formal change request with justification

Assess

Impact analysis: risk, cost, schedule, dependencies

Approve

CAB (Change Advisory Board) reviews & approves

Test

Test in non-production environment first

Deploy

Implement change with rollback plan ready

Review

Post-implementation verification & close
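
The six steps above imply a simple audit test: no change should reach "implemented" without approval, testing, and a rollback plan on record. The sketch below runs that test over a hypothetical change log. The field names and change IDs are assumptions, not Meridian's actual log format.

```python
# Hypothetical change log extract; field names are illustrative.
CHANGE_LOG = [
    {"id": "CR-001", "status": "implemented", "cab_approved": True, "tested": True, "rollback_plan": True},
    {"id": "CR-002", "status": "implemented", "cab_approved": False, "tested": True, "rollback_plan": False},
    {"id": "CR-003", "status": "requested", "cab_approved": False, "tested": False, "rollback_plan": False},
    {"id": "CR-004", "status": "implemented", "cab_approved": True, "tested": False, "rollback_plan": True},
]

REQUIRED_BEFORE_DEPLOY = ("cab_approved", "tested", "rollback_plan")


def control_exceptions(change_log):
    """Return {change_id: [missing controls]} for implemented changes that skipped a step."""
    exceptions = {}
    for change in change_log:
        if change["status"] != "implemented":
            continue  # only changes that reached production are exceptions
        missing = [control for control in REQUIRED_BEFORE_DEPLOY if not change[control]]
        if missing:
            exceptions[change["id"]] = missing
    return exceptions


exceptions = control_exceptions(CHANGE_LOG)
for change_id, missing in exceptions.items():
    print(f"{change_id}: implemented without {', '.join(missing)}")
```

Run against a log like Meridian's, where twelve changes were implemented and none went through the CAB, every implemented change would appear as an exception.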

Emergency Changes

- Bypass normal approval (pre-authorised)

- Must be documented retroactively

- Reviewed by CAB after implementation

- High audit risk — watch for abuse

Rollback Plan

- Must exist BEFORE deployment

- Tested and validated

- Defines criteria for triggering rollback

- Includes data restoration procedures

ALL changes must go through change management, including emergency changes (which are documented and reviewed after the fact). In IS audit, change management means the formal process for requesting, approving, testing, and implementing system changes, NOT change psychology or organisational change management. The auditor's greatest concern is unauthorised changes that bypass the process entirely.

The Knight Capital trading loss (2012) was triggered by a change management failure: a software deployment activated legacy code that should have been disabled. There was no rollback plan, no change advisory board review, and no post-deployment verification. The company lost $440 million in 45 minutes.

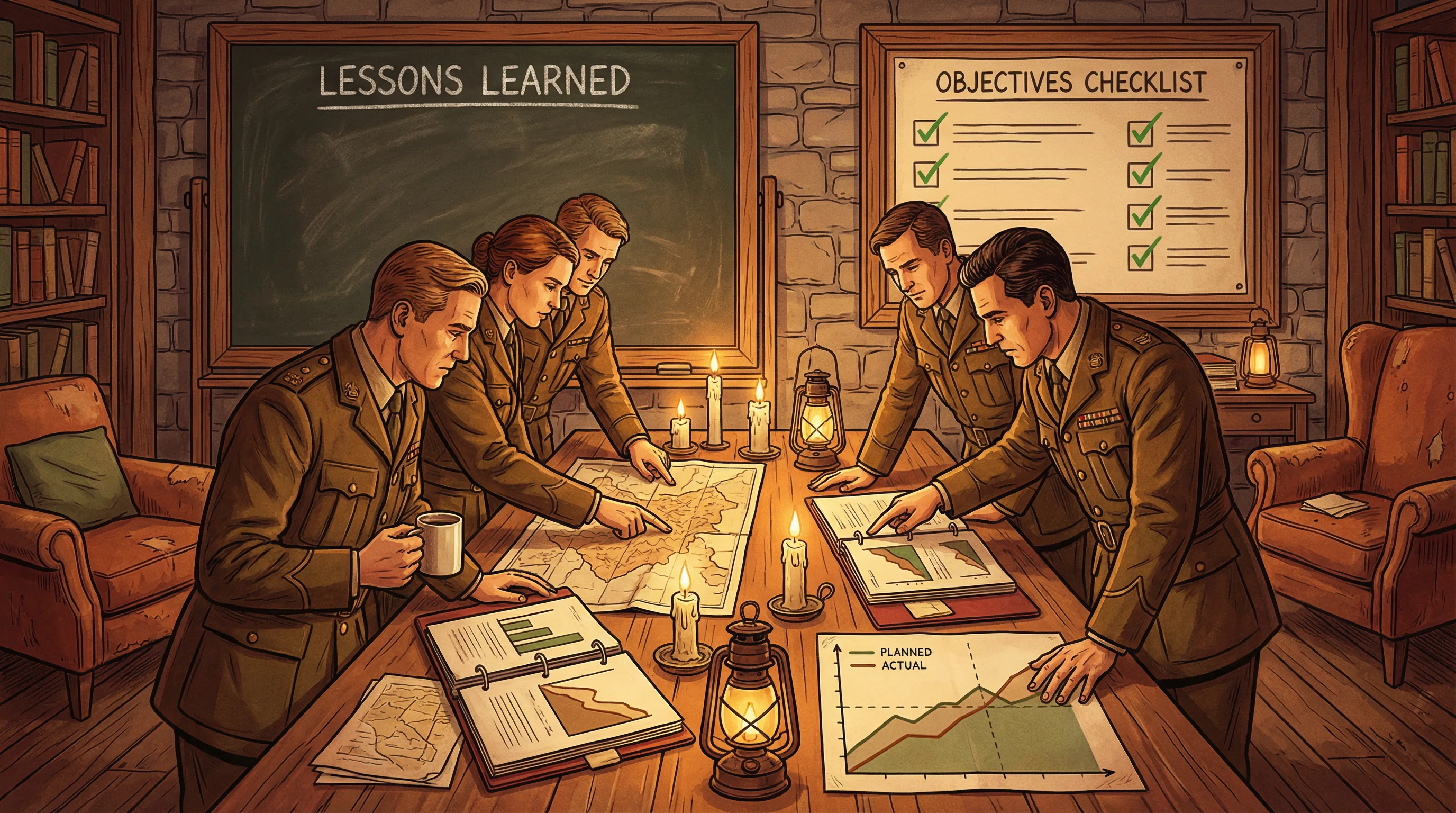

47 changes, no approvals. Alex wonders: does Meridian ever look back at what went wrong? She checks for post-implementation reviews.

Post-Implementation Review

Post-Implementation Review (PIR)

The last system Meridian launched — a billing platform, 18 months ago — has no PIR. Alex asks why. "Once it went live, we moved on," says Dave. Alex pulls up the billing system's incident log. Fourteen critical bugs in the first three months. Three data integrity issues still unresolved. "These are the same types of problems you're about to repeat with the CRM," she says. No lessons captured, same mistakes repeated.

Were Objectives Met?

Compare actual outcomes against the original business case and project charter goals.

Cost & Schedule Review

Compare actual costs and timelines against estimates. Identify variances and root causes.

Lessons Learned

Document what went well and what didn't. Feed improvements into future projects.

User Satisfaction

Survey end users. Are they using the system? Does it solve their problems? Any workarounds?

PIR Timing

Typically conducted 3-6 months after go-live — enough time for the system to stabilise and for meaningful data to be collected. Conducting it too early misses long-term issues; too late and the lessons are forgotten.

A PIR should compare actual results to the ORIGINAL business case, not to revised expectations. PIR should happen after a stabilisation period (typically 3-6 months) — too early and you can't see real operational performance. The IS auditor should verify that a PIR was conducted AND that lessons learned were documented and communicated for future projects.

NASA conducts post-implementation reviews after every mission. The Columbia disaster (2003) investigation revealed that lessons from the Challenger disaster (1986) had not been institutionalised — a PIR without action is a PIR without value.

No lessons learned. Alex turns to a different question: how did Meridian even select this CRM software in the first place?

Acquisition & Procurement

Procurement & Vendor Selection

The CRM software was selected by Dave after a single vendor demo. No RFP. No vendor comparison. No contract review by legal. Alex pulls up the licence agreement — and finds an auto-renewal clause nobody noticed. The contract renews automatically in 90 days at a 15% price increase. "Did legal review this?" she asks. Dave says, "We didn't think we needed to." Alex adds three more findings to her growing list.

Define Needs

Requirements & evaluation criteria

RFI / RFP

Request information or formal proposals

Evaluate

Score vendors using weighted criteria

Select

Choose vendor & negotiate contract

Contract

SLA, penalties, escrow, IP rights

RFI vs. RFP vs. RFQ

- RFI — Request for Information: exploratory, learning about vendors

- RFP — Request for Proposal: detailed requirements, formal bid

- RFQ — Request for Quotation: price-focused, specs are known

Software Escrow

- Source code held by neutral third party

- Released if vendor goes bankrupt or stops supporting the product

- Protects buyer's investment

- Critical for custom / proprietary software

Key Contract Elements to Audit

- SLAs & performance metrics

- Penalty clauses

- IP ownership & licensing

- Right-to-audit clause

- Data protection & privacy

- Exit / termination terms

Always verify that vendor evaluation uses predefined, weighted criteria — not just the lowest price or a single demo. A signed contract is necessary but NOT sufficient — SLAs must be monitored, performance reviewed, and penalties enforceable. The contract should include a right-to-audit clause giving the organisation access to the vendor's controls.
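
A weighted scoring model is easy to sketch. The criteria, weights, and scores below are hypothetical. The point is that the weights are fixed before any vendor is scored, and that the cheapest or most impressive demo does not automatically win.

```python
# Hypothetical criteria and weights (percent), agreed BEFORE proposals are opened.
WEIGHTS = {"functional_fit": 40, "security": 30, "cost": 20, "support": 10}

# Hypothetical evaluator scores on a 1-5 scale.
VENDOR_SCORES = {
    "Vendor A": {"functional_fit": 5, "security": 3, "cost": 2, "support": 4},
    "Vendor B": {"functional_fit": 4, "security": 4, "cost": 4, "support": 3},
}


def weighted_score(scores, weights):
    """Weighted average of criterion scores; weights must total 100 percent."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    return sum(weights[criterion] * scores[criterion] for criterion in weights) / 100


results = {vendor: weighted_score(scores, WEIGHTS) for vendor, scores in VENDOR_SCORES.items()}
best_vendor = max(results, key=results.get)

print(results, best_vendor)
```

Here Vendor A has the best functional fit but loses overall. An auditor would check that the weights and scores were documented and that the selected vendor matches the scored result.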

The UK's NHS IT programme (2003-2011) selected vendors without competitive RFP processes in some cases. The £10 billion programme was eventually abandoned after massive cost overruns and failed deliveries.

No RFP, no legal review, an auto-renewal trap. Alex decides to test the CRM herself — and what she finds is alarming.

Application Controls

Input, Processing & Output Controls

Alex runs three test transactions through the CRM. One allows a negative invoice amount. One accepts a customer name field with SQL injection characters. The third creates a duplicate customer record with no warning. Input validation: failing on all three tests. "Has anyone tested the input controls?" she asks. The answer is written on every developer's face: no.

Input Controls

Garbage in = garbage out

- Field validation (type, range, format)

- Check digits (e.g., Luhn algorithm)

- Sequence checks

- Duplicate detection

- Authorisation of data entry

Processing Controls

Ensure accurate transformation

- Run-to-run totals

- Reasonableness checks

- Limit checks

- Reconciliation of control totals

- Exception reporting

Output Controls

Right data to right people

- Report distribution lists

- Output reconciliation

- Retention & disposal rules

- Spooling controls

- Print queue security

Edit Controls — The Input Gatekeepers

- Validity check — Is the value in the allowed set?

- Range check — Is the value within limits?

- Reasonableness check — Does it make sense?

- Check digit — Is the number valid?

- Completeness check — Are all fields filled?

- Duplicate check — Already entered?
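
The edit controls above can be sketched as one validation routine. Everything specific here is an assumption for illustration: the field names, the currency set, the amount ceiling, and the idea that customer IDs carry a Luhn check digit. The Luhn routine itself follows the standard algorithm.

```python
ALLOWED_CURRENCIES = {"USD", "EUR", "GBP"}   # hypothetical allowed set
MAX_INVOICE_AMOUNT = 100_000                  # hypothetical upper limit
REQUIRED_FIELDS = ("invoice_id", "customer_id", "amount", "currency")


def luhn_valid(number):
    """Check digit test: standard Luhn algorithm over a string of digits."""
    if not number.isdigit():
        return False
    total = 0
    for position, char in enumerate(reversed(number)):
        digit = int(char)
        if position % 2 == 1:      # double every second digit from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def validate_invoice(record, seen_invoice_ids):
    """Return a list of edit-control failures; an empty list means the record passes."""
    missing = [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
    if missing:
        return ["completeness: missing " + ", ".join(missing)]
    errors = []
    if record["currency"] not in ALLOWED_CURRENCIES:
        errors.append("validity: currency not in allowed set")
    if not 0 < record["amount"] <= MAX_INVOICE_AMOUNT:
        errors.append("range: amount out of bounds")
    if not luhn_valid(record["customer_id"]):
        errors.append("check digit: customer_id fails Luhn")
    if record["invoice_id"] in seen_invoice_ids:
        errors.append("duplicate: invoice_id already entered")
    return errors


negative = {"invoice_id": "INV-1", "customer_id": "79927398713", "amount": -50, "currency": "USD"}
print(validate_invoice(negative, seen_invoice_ids=set()))
```

The negative amount from Alex's first test transaction is rejected by the range check. Free-text fields such as customer names need separate handling (for example, parameterised queries), which this sketch does not cover.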

Input controls are the MOST important application controls — preventing bad data from entering is more effective than catching errors during processing. Know the edit control types: validity, range, reasonableness, check digit, completeness, and duplicate checks. If the exam asks "which control would BEST prevent [bad data scenario]?" — look for the matching input control type.

The 2012 Knight Capital loss was partly enabled by missing application controls: the system had no hard limits on trade volume that would have stopped the runaway algorithm. Limit and reasonableness checks exist to prevent exactly this kind of catastrophic error.

Failing input controls. But there's one more critical concern: Meridian is about to migrate 50,000 customer records into this broken system.

Data Conversion & Migration

Data Migration & Integrity

50,000 customer records are being migrated from the old system. The migration script has been run twice in testing. Both times, 340 records failed silently — no error log, no alert. Nobody knew. Alex asks, "How do you know all the records migrated correctly?" Dave says, "We checked the total count." Alex pulls up the data. The count matches. But 340 records have corrupted phone numbers, 12 have truncated addresses, and 3 customer records point to the wrong account. "Counts match" doesn't mean "data is correct."

Plan

Define scope, mapping rules, timeline

Extract

Pull data from old system

Transform

Cleanse, map, convert formats

Load

Import into new system

Verify

Reconcile & validate integrity

Data Integrity Checks

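- Record counts: Same number of records before and after

- Control totals: Sum of a meaningful numeric field (e.g., account balance) matches

- Hash totals: Sum of a non-financial field (e.g., account number), meaningless in itself, that must match

- Sampling: Spot-check individual records field by field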
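
The sketch below shows why aggregate checks are not enough. The three records are made up; one phone number is truncated in the target, yet the record count, control total, and hash total all still match. Only the field-level comparison, here run on every record rather than a sample, catches it.

```python
# Hypothetical source and target extracts after a test migration.
SOURCE = [
    {"customer_id": 1001, "phone": "555-0101", "balance": 250},
    {"customer_id": 1002, "phone": "555-0102", "balance": 0},
    {"customer_id": 1003, "phone": "555-0103", "balance": 975},
]
TARGET = [
    {"customer_id": 1001, "phone": "555-0101", "balance": 250},
    {"customer_id": 1002, "phone": "555-01", "balance": 0},    # silently truncated
    {"customer_id": 1003, "phone": "555-0103", "balance": 975},
]


def reconcile(source, target, key="customer_id", amount_field="balance"):
    """Run count, control-total, hash-total, and field-level checks on a migration."""
    target_by_key = {row[key]: row for row in target}
    # Field-level check: a source record is mismatched if it is absent or differs in any field.
    mismatched = [row[key] for row in source if target_by_key.get(row[key]) != row]
    return {
        "record_count_ok": len(source) == len(target),
        "control_total_ok": sum(r[amount_field] for r in source) == sum(r[amount_field] for r in target),
        "hash_total_ok": sum(r[key] for r in source) == sum(r[key] for r in target),
        "mismatched_keys": mismatched,
    }


report = reconcile(SOURCE, TARGET)
print(report)
```

This mirrors Dave's "we checked the total count": every aggregate passes while a record is corrupt. A migration script should also log and alert on each failed record instead of failing silently.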

Common Migration Risks

- Data loss during extraction

- Format incompatibilities

- Truncation of field values

- Referential integrity violations

- Missing or corrupted records

Parallel Run Strategy

Running both old and new systems simultaneously for a period. Outputs are compared to verify the new system produces the same results. This is the safest but most expensive implementation approach.

Parallel

Safest, most costly

Phased

Gradual rollout

Pilot

Test group first

Big Bang

All at once, highest risk

Data integrity is the auditor's #1 concern during migration. Record counts alone are NOT sufficient — you must also verify control totals, hash totals, and spot-check individual records. Parallel running is the safest implementation approach; big bang is the riskiest. Data migration is complete when all records are transferred AND verified for completeness, accuracy, and integrity — silent failures are the most dangerous.

During the TSB Bank migration (2018), 1.3 billion records were migrated from Lloyds Banking Group's platform to a new system. Due to data integrity failures during migration, 1.9 million customers were locked out of their accounts for weeks.

Alex recommends: delay the go-live.

Six weeks. Twelve sections of her audit workpaper. Seventeen findings, four of them critical. No charter. No methodology. No feasibility study. Unsigned requirements. Undocumented design. Incomplete testing. Uncontrolled changes. No PIR history. No competitive procurement. Failing input controls. Silent data migration failures. Alex presents her findings to the steering committee. Dave is quiet. The CIO nods. "We'll delay the go-live until these are addressed." Alex didn't make the decision — but her evidence made the decision inevitable.

Top 10 Exam Traps — Domain 3

✓ Agile prioritises working software OVER comprehensive documentation — not instead of it. Critical controls documentation is still required.

✓ The project sponsor (business owner) is accountable for the business case — the PM manages delivery.

✓ UAT is MANAGEMENT's responsibility. The auditor verifies that it was done adequately but does not plan or execute it.

✓ Unit testing only validates individual components. Integration, system, and UAT testing are all required before go-live.

✓ In IS audit, change management means the formal process for requesting, approving, testing, and implementing system changes — not change psychology.

✓ Outsourcing transfers execution — management retains accountability for project success and deliverable quality.

✓ Contract existence is necessary but not sufficient — SLAs must be monitored, performance reviewed, and penalties enforceable.

✓ All four feasibility types matter: Economic, Technical, Operational, AND Legal. A project can be economically attractive but technically impossible.

✓ PIR should happen after a stabilisation period (typically 3-6 months) — too early and you can't see real operational performance.

✓ Data migration is complete when all records are transferred AND verified for completeness, accuracy, and integrity — silent failures are the most dangerous.